A radical idea that resolves many quantum paradoxes suggests there is no objective view of reality. How can the cosmos be stitched together from interlocking perspectives?

What is now? The nature of the ever-changing present moment has always fascinated me, because there is a paradox at its heart. From a personal perspective, the present is everything: it is the only time we can ever act or choose; the only thing we can ever experience or know. What did you have for breakfast? Where do you hope to go tomorrow? Even our memories and plans are forged in the present; we can only experience them now.

And yet, the conventional view of physics is that now, as we usually think of it, doesn’t actually exist at all. In Albert Einstein’s theory of relativity, all time points are equal: any event can be already done or yet to occur, from different points of view. There is no cosmic unfolding through which reality comes to be.

This raises a problem for us as thinking, feeling humans. If now is an illusion, then we cannot intervene in that moment to affect the future, because all events and times already exist. There is no gateway through which our in-the-moment thoughts or desires can reach out and change anything. By getting rid of now from the universe, we have lost a key part of ourselves.

In writing my book, In Search of Now, I wanted to know if there is another way. Can we reconcile scientific evidence with a cosmos that includes us and the choices we make? The answer, I found, was yes. But only if we are prepared to radically rethink what reality is and who we are.

‘The world is such that you cannot separate yourself from it,’ says Michel Bitbol, a philosopher of physics at the École Normale Supérieure in Paris.

Quantum paradoxes

To see how, let’s start with a classic thought experiment, suggested in the 1970s by renowned physicist John Wheeler. It is beautifully simple, in principle at least, yet it is a vivid demonstration that the universe – and time – may work very differently from how we often assume.

Wheeler’s set-up is a variant of the famous double-slit experiment of quantum physics, in which an experimenter’s choice of what to measure determines what they find. Photons are fired at a screen with two slits in it. If physicists don’t observe which route a photon takes, it seems to behave like a wave, spread across both slits. If they do observe, it acts as a particle, passing through just one slit. This is strange enough: a mysterious switch from fuzziness to certainty at just the moment we look (quantum physicists call this ‘collapse’).

But Wheeler raised the stakes. He asked what would happen if physicists didn’t decide whether to check a photon’s route until after it had already completed its journey. In the decades since, researchers have repeatedly found just what Wheeler predicted in his delayed-choice experiment: the decision still affects the photon’s path.

Wheeler described this as ‘a strange inversion of the normal order of time’, as if our choices aren’t just influencing the present, but also the past. Physicists have tried to make sense of it, along with other quantum paradoxes, in ingenious ways: suggesting branching realities in which all possibilities already exist in a vast multiverse, or proposing an unseen guiding influence, or so-called pilot wave, that can instantaneously link different parts

Tag: “hallucination”

-

Why the Smartest Startups Are Letting AI Do the Work

The narrative around Artificial Intelligence (AI) and Machine Learning (ML) has shifted fundamentally. We have moved past the initial phase of ‘hype’ and ‘experimentation’, characterized by amusing chatbots and novel image generators, into an era of tangible utility and structural transformation. For startups operating in the landscape of 2026, AI is no longer just a shiny feature to pitch to investors; it is the fundamental engine driving a massive productivity overhaul across the enterprise and consumer economy.

From autonomous software engineers that write and test their own code to predictive supply chains that anticipate disruptions before they occur, the new wave of AI startups is not just helping us work faster; it is helping us work smarter. Drawing on recent, high-impact data from industry leaders like EY, the OECD, and the Government of India’s latest strategic AI missions, this article explores how AI & ML startups are reshaping the productivity landscape, defining a new economic reality where efficiency is the default.

The Agentic Shift: From ‘Chat’ to ‘Action’

For the last two years, the world was captivated by Generative AI (GenAI), systems that could write poems, draft emails, or summarize reports. However, the real productivity revolution is being driven by a new evolution: Agentic AI. According to the EY ‘AIdea of India’ report, we are witnessing a critical transition from passive ‘chatbots’ to active ‘agents’ [11]. Unlike a standard chatbot that waits for a prompt to answer a question, an AI Agent uses reasoning loops to independently plan and execute complex workflows without constant human hand-holding.

The Mechanism of Action

A traditional AI might tell you how to file an invoice. An Agentic AI will open your accounting software, read the invoice, match it to the purchase order, verify the tax codes, and file it for you, asking for human approval only if it detects an anomaly. This moves the user from being a ‘doer’ to a ‘reviewer.’

Enterprise Integration: As noted by Mendix, the future of enterprise applications lies in these autonomous agents that can navigate complex legacy systems [2]. For startups, this lowers the barrier to entry for disrupting traditional industries like logistics or insurance, where paperwork has historically been a bottleneck.

Startup Impact: This shift allows lean startups to automate entire departments, such as L1 customer support or basic QA testing. Startups are now deploying ‘digital workers’ that handle repetitive cognitive tasks, freeing up human talent for creative, strategic, and empathetic problem-solving.

The Economic Unlock: Productivity by the Numbers

The economic implications of this technological shift are staggering. Productivity in this context is not just a buzzword; it is measurable output that drives GDP and operational efficiency.

The ‘Automate, Augment, Amplify’ Framework

The EY report introduces a compelling framework for understanding this impact:

Automate: Tasks that can be fully handled by AI (e.g., data entry, scheduling).

Augment: Tasks where AI acts as a copilot, reducing time spent (e.g., coding assistants, legal drafting).

Amplify: Tasks where AI enhances human capability, leading to higher quality outcomes (e.g., strategic planning, creative design).

Using this lens, the economic data is robust:

The India Opportunity: The EY report highlights that GenAI could potentially drive a 2.61% boost in productivity by 2030 in India’s organized sector [11]. Furthermore, the impact on the unorganized sector could reach 2.82%, democratizing efficiency across the socio-economic spectrum.

-

I’ve Been Paying for Voice AI for Two Years. Five Days Ago, a Model Dropped That Made Every Invoice I’ve Paid Feel Like a Mistake.


TADA just changed the economics of voice AI overnight. Here’s what it is, why it matters, and exactly how to run it — before the API providers adjust their pricing

Anup Karanjkar


This article is for the developer who’s still paying per-character for voice AI. Something just changed.

I’m writing this before the rest of the internet notices.


You’ve been here before. Someone announces an open-source TTS model. You get excited. You clone the repo, run inference, and the output sounds like a GPS navigator from 2014 reading a ransom note. Or worse — it sounds decent for the first sentence, then starts inventing words that weren’t in your input. Phantom syllables. Skipped paragraphs. The kind of hallucination that makes the output useless for anything production-grade.

So you close the terminal, go back to ElevenLabs or Play.ht, and you tell yourself this is just the cost of quality voice AI. Maybe next year.

-

Mass Hallucination: Mass Hallucination

Mass Hallucination

(Makeshift Swahili)

Out Now

DL | Cassette

No need for lyrical analysis. No need for thematic detail. No need for anything but three swipes of the spinal cord. Three internal scrapings from the new release by Mass Hallucination. By Ryan-Lewis Walker.

The crack of the neck. And the crack of everything else.

Composed of Chris R and Tom Shuff of Coded Marking, former Cattle bassist Tom Goodall and Will from Self-Immolation Music, and released via Glasgow’s Makeshift Swahili, this eponymous album follows the band’s debut demo from August last year.

This time, although still dedicated to the twitchy contortions of all things that slide in and out of the parameters according to noise, hardcore and post-punk – a sound entirely built to blow nowhere except out, they wanted to produce something that was still just as raw, but a little more polished and song-structured.

First up, Lacerated leans into the flank with a finger instead of knives, yet succeeds in introducing a hole just as deep as any common blade. Bookended with metallic aches of feedback scraping across the dead air in a decaying chamber, its suppurated complexion surges and squeals with cannibalistic action.

It’s soon followed by Human Figures. Cracking apart like a blowtorch to the bosom of a leather settee, it erupts from the shackles in a skin-soldering rush of golem–goading power. Thuds of molten bass, collisions of feral guitars, vocals howling into the abyss, all swallowed by the concave of density and despair.

Finally, in Dragging A Rope we hear a near-perfect synergy of those Negative Approach and Killing Joke influences bleeding through. A tag-team of bass and drums punctuates the battleground whilst humanoid fractures of manic guitars shave with shards of glass elsewhere, ending on an explosion akin to sticks of dynamite strapped to the base of a brutalist building at the interchange of West Yorkshire and Hell.

No need for lyrical analysis. No need for thematic detail. No need for anything.

The crack of the…

~

Mass Hallucination | Bandcamp

Makeshift Swahili | Bandcamp

Ryan-Lewis Walker | Louder Than War

-

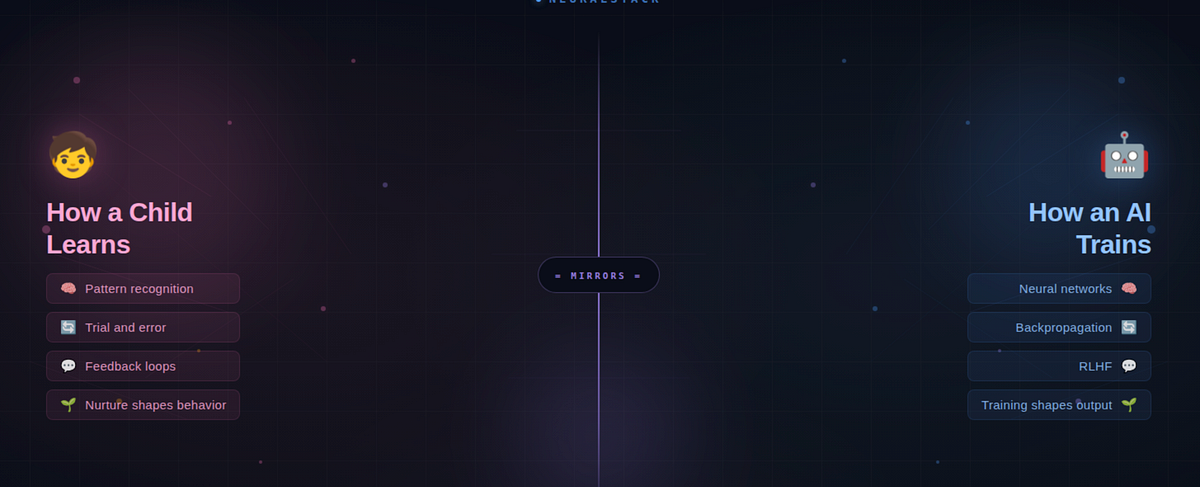

The Parenting Mirror: What Learning AI is Teaching Me

Vikas

Maybe the best engineers aren’t just good at code. Maybe they’re good at patience.

Punish a child every time they fail, and they stop trying. Praise them for everything, and they never grow. Now replace ‘child’ with ‘AI model.’ The sentence still holds. That’s not a coincidence; it’s the same underlying principle. And once you see it, you can’t ignore it.

I started learning AI to build stuff and make my life easy. But somewhere along the way, AI taught me something about raising kids. And watching my kids taught me why AI works the way it does. Let me explain.

A Toddler and a Neural Network Walk Into a Dog Park

When your toddler points at a Labrador and says ‘dog,’ then points at a German Shepherd and says ‘dog’ again, that’s pattern recognition. Nobody handed them a rulebook. Nobody said ‘four legs + fur + tail = dog.’ They figured it out from examples. Lots of examples.

That’s exactly how a neural network learns. You feed it thousands of labeled images (‘this is a dog,’ ‘this is not a dog’) and it builds its own internal rulebook. No programmer writes the rules explicitly. The model discovers the patterns from data, the same way your kid discovered ‘dog’ from a hundred visits to the park.

And when your toddler points at a cat and confidently says ‘dog’? That’s not failure. That’s learning in progress. They saw four legs, fur, a tail, and made their best guess. In machine learning, the correction step is handled by backpropagation, a technical term for a simple idea: measure how wrong the output was, then trace that error back through the network so each weight can adjust. It’s the machine equivalent of a patient parent saying, ‘Close! That’s actually a cat. See the pointy ears?’

But here’s where it gets deeper. It’s not just that you give feedback. It’s how you give it.

The Parenting Mirror

Think about three types of parents. Each one maps directly to a style of AI training, and each produces a very different outcome.

🚫 The Punisher: Correct Every Mistake Harshly

With a child: They stop exploring. They become anxious. They only give ‘safe’ answers to avoid getting yelled at. Creativity dies. Survival mode kicks in.

With AI: This is over-alignment. The model gets penalized so aggressively for any wrong output that it refuses to answer even harmless questions. It’s the AI equivalent of a kid who won’t raise their hand in class because they got scolded once. The model isn’t safe; it’s paralyzed.

You’ve probably experienced this. You ask an AI a perfectly reasonable question and get: ‘I’m sorry, I can’t help with that.’ That’s an over-punished model. It learned that saying nothing is safer than risking a mistake.

🧸 The Coddler: Never Say No

With a child: They grow up overconfident and fragile. They think every answer they give is correct. They never develop resilience because they’ve never encountered real feedback.

With AI: This is the hallucination problem. A model trained with weak or absent correction generates confident, polished, beautifully written responses that are completely wrong. It doesn’t know it’s wrong because nobody ever told it. Just like a child who was never corrected, it mistakes confidence for competence.

🌱 The Nurturer: Boundaries With Room to Grow

With a child: They feel safe enough to fail. They learn from mistakes without fear. They develop judgment,

-

AI is dumping 150-page complaints on finance ombud’s desk

What used to take a paragraph or two now comes in book-sized tomes, often with fake legal cases as references.

The idea that artificial intelligence (AI) will ease workloads is certainly not being borne out at the National Financial Ombud (NFO). Complaints that previously would take a paragraph or two are now – with the aid of AI – coming in 150-page tomes, often with fake legal cases as references, says Reana Steyn, NFO CEO and head ombud, speaking at the Conduct Risk Conference in Midrand on Wednesday (11 March).

Where the complaints adjudicators would previously look at a paragraph and make a call on whether to investigate further, they are now required to review complaints that sometimes run to 150 or even 200 pages. It’s clear these are drafted using AI.

‘We’re supposed to be a speedy and efficient dispute resolution body. The institution against which the complaint is made is also using AI to reply,’ says Steyn, noting that this adds to the ombud’s already stretched workload. Steyn says the ombud takes pains to ensure its responses are crafted by humans, not AI.

False facts being dished up

Some of the case law cited in these AI-generated complaints to the NFO is completely fake. This is a problem that has already surfaced on multiple occasions in SA courts (see below). Fake or not, these complaints and the legal citations have to be researched and investigated by the NFO.

The ombud says it must strike a balance between consumer advocacy and impartial resolution. About half the credit-related complaints were decided in favour of consumers in 2024. The figure was 25% for life insurance, 12% for non-life insurance and 21% for banking services.

The NFO’s 2024 report – released in 2025 – shows nearly 36 000 complaints received between March and December of that year, with 28 000 resolved in the same period.

Money recovered

The ombud recovered R328 million for consumers, with an average of 115 days to resolve a case. In cases involving the banks, the time to resolve a case is about 52 days.

‘Over the past year, the organisation recovered a staggering R328.5 million on behalf of consumers, a figure that speaks volumes about the power of independent mediation and the tangible benefits the NFO delivers,’ says the 2024 annual report.

The NFO was created in 2024 to amalgamate four predecessor ombuds: credit, banking services, long-term insurance and short-term insurance. This makes it easier for consumers to lodge complaints under a single umbrella organisation. The Pension Funds Adjudicator and Financial Advisory and Intermediary Services (Fais) ombud continue to operate independently.

Stats … and additional pressures

Steyn says the NFO receives tens of thousands of calls and emails a month. When complaints don’t go the way of the consumer, many take to social media to relitigate their complaints.

About 5% of complaints at the NFO come from people over 85, many of whom are less comfortable in a digital environment. The NFO has a policy for vulnerable customers, offering extra duty of care to those in poor health, or suffering financial or other hardships.

The Financial Sector Conduct Authority (FSCA) has issued multiple warnings about AI-generated fraud. Scammers exploit AI to create convincing impersonations that lend false legitimacy to unauthorised investment schemes, fake trading platforms, and solicitation of funds via apps like WhatsApp, Telegram, and Facebook.

Fake case law dreamed up by AI

In one recent case before the KwaZulu-Natal High Court, Judge Elsje-Marié Bezuidenhout issued a stinging rebuke against law

-

Google overhauls its Maps app, adding in more AI features to help people get around

By MICHAEL LIEDTKE, AP Technology Writer

Google Maps will depend more heavily on artificial intelligence to help people figure out where they want to go and the best way to get there as part of a major redesign unveiled on Thursday.

The overhaul driven by Google’s Gemini technology will introduce two AI features into a digital mapping service used by more than 2 billion people worldwide.

One tool called Ask Maps will expand upon conversational abilities that Google brought to the service last November, giving suggestions to users looking for things such as nearby places to charge their devices, cafes with short lines or a detailed itinerary for a road trip involving several stops and excursions.

Gemini’s recommendations will draw upon a database spanning more than 300 million places and reviews from more than 500 million contributors that have been accumulated since Google Maps’ debut more than 20 years ago. Google executives declined to answer a question about whether the company eventually plans to sell ads to boost businesses’ chances of being displayed in Ask Maps’ recommendations. Ask Maps initially will be available on Google Maps’ mobile app for iPhones and Android software in the U.S. and India, before expanding to personal computers and other countries.

In what Google executives are billing as the biggest change to the maps’ driving directions, Gemini has also created a new tool dubbed Immersive Navigation that will present a three-dimensional perspective designed to give users a better grasp of where they are at any moment in time. The 3D renderings created by Gemini will include landmarks such as notable buildings, medians in the roads and other aspects of the terrain that drivers are seeing around them as they drive to help them get their bearings more quickly.

Google believes its AI guardrails are now strong enough to prevent the Gemini technology underlying Immersive Navigation from fabricating bogus places to go, a malfunction known within the industry as a ‘hallucination.’

Immersive Navigation is also supposed to help Google Maps more clearly explain the pros and cons of different driving routes to the same recommendation, as well as point to the best places to park once a user arrives at a designated destination. The new AI-powered navigation will only be available in the U.S. initially, on Google Maps’ mobile app for the iPhone and Android, as well as cars equipped with options to activate CarPlay and Android Auto.

The increased reliance on AI in Google Maps follows the company’s introduction of more Gemini technology to make two of its other most popular products, Gmail and the Chrome web browser, more proactive and helpful to their billions of users. The expansion underscores Google’s confidence in the Gemini 3 model that the Mountain View, California, company released late last year as part of an intensifying battle for AI supremacy with up-and-coming rivals such as OpenAI and Anthropic.

-

The AI data gold rush is here and Corporate America is ready

The era of free AI training data is over. Reddit $RDDT charges millions for API access. The New York Times sued. Publishers are blocking scrapers. Even if AI companies could still vacuum up the public internet, they’re running into a bigger problem: they need different kinds of data entirely for the next leap in abilities.

Large language models were built by scraping text and images from the web. But as AI systems move beyond chatbots, they need training data that was never publicly available in the first place. Data that’s locked away, or scattered, or doesn’t even exist yet. New markets are emerging to unlock these sources. Here are three.

Your digital exhaust, monetized

Most people think of personal data as Social Security numbers and health records. But nearly everything you do online generates data that platforms collect and use: your Spotify $SPOT listening history, your email patterns, the documents you write in Google $GOOGL Docs, your conversations with ChatGPT.

When you download your Instagram data, for example, the company doesn’t just give you your photos. You get everything Instagram has inferred about you based on your browsing behavior: hundreds of data points ranging from innocuous labels like “interested in nature” to psychological assessments like whether you have depression. None of it is publicly scrapeable. All of it is legally yours.

“If you park your car in a parking lot, the parking lot doesn’t own your car,” says Anna Kazlauskas, CEO of Vana, a company building infrastructure for individuals to contribute their platform data to AI training. The same principle applies to data: you own it, even if it lives on someone else’s server.

The scale is massive. A version of Common Crawl, the dataset that trained Meta $META’s Llama 3, contains about 15 trillion words scraped from the public internet. If 100 million people each contributed data exports from just five platforms, that would yield 450 trillion tokens, 30 times larger than any existing dataset. This type of data could unlock personalized AI that understands your music taste, or health models trained on real sleep and fitness data, all impossible with scraped web content.

Kazlauskas says that paying people for data only they can provide could also reshape the broader AI debate. “A lot of the fear around AI comes from the lack of proper attribution and economics,” Kazlauskas says. “If you teach AI how to do your job, you should actually own that AI model.”

Mapping the physical world

Text models could train on scraped web data. But the next generation of AI needs accurate, consistent information about the physical world. Robots navigating cities, autonomous vehicles, and augmented reality all need high-fidelity digital maps to make decisions against.

The problem is that existing aerial data is fragmented. It comes from various contractors with different sensors at different accuracies, making it nearly impossible to train reliable geospatial models. Satellite imagery, while covering most of the planet, lacks the resolution. The data layer AI companies need simply doesn’t exist yet.

Spexi is trying to build it using gig workers and drones. The company has more than 10,000 pilots fly standardized missions at 80 meters altitude. In the past 18 months, they’ve covered more than 6 million acres across 300 North American cities at higher resolution than satellites or traditional aerial imagery, says Bill Lakeland, Spexi’s CEO.

Spexi is working with companies like Niantic to train large geospatial models for augmented reality and robotics. Unlike language models, these need constant updating as buildings rise and roads change. It’s a version of the same problem plaguing ChatGPT and other LLMs —

-

MIT scientists develop AI system to improve robot planning

Robots are poised to become significantly more adept at navigating and interacting with the world, thanks to a new hybrid AI framework developed by researchers at MIT. This isn’t about building robots that *look* more human; it’s about giving them the cognitive tools to reliably perform complex tasks in unpredictable environments.

The Challenge of Complex Visual Tasks

Traditionally, robotic planning has struggled with the nuances of real-world vision. Robots often have difficulty interpreting images, predicting the outcomes of their actions, and adapting to unexpected changes. Existing methods often achieve success rates around 30 percent, limiting their usefulness in dynamic settings. The new MIT framework aims to change that.

How the Hybrid AI System Works

The core innovation lies in combining the strengths of generative AI and classical planning software. The system uses two specialized vision-language models. The first analyzes an image, providing a description of the environment and simulating potential actions. The second model then translates this simulation into a formal programming language that established planning software can understand.

This two-step process allows robots to ‘think through’ a task before executing it, significantly increasing the likelihood of success. The system doesn’t just react to what it sees; it anticipates and plans.

Impressive Results: A 70% Success Rate

Testing has demonstrated a substantial improvement over existing techniques. The MIT framework achieved an average success rate of approximately 70 percent, more than doubling the performance of many baseline methods. Crucially, this performance remained consistent even in unfamiliar scenarios, highlighting the system’s adaptability.

Did you know? Generative AI is not just for creating images and text; it’s now being used to design and optimize robotic systems themselves, as demonstrated by recent work at MIT improving robot designs for jumping.

Real-World Applications on the Horizon

The potential applications of this technology are vast. The method could support advancements in robot navigation, making warehouse automation and delivery services more efficient. It also has implications for autonomous driving, enabling vehicles to better understand and respond to complex traffic situations. Collaborative robotic assembly systems, where robots work alongside humans, could also benefit from this improved planning capability. The framework could also be applied to scenarios requiring intricate manipulation, such as surgical robotics or delicate manufacturing processes.

Addressing the ‘Hallucination’ Problem

While promising, the researchers acknowledge the need to address potential issues with AI model ‘hallucinations’ – instances where the AI generates incorrect or nonsensical information. Continued development will focus on mitigating these errors and ensuring the reliability of the system in critical applications.

Future Trends: Towards More Autonomous and Adaptive Robots

This research represents a significant step towards more autonomous and adaptive robots. We can expect to see further integration of generative AI into robotic systems, leading to machines that can learn from experience, generalize to new environments, and perform increasingly complex tasks with minimal human intervention.

The convergence of AI, robotics, and computer vision is also driving innovation in areas like speech-to-reality systems, where robots can build objects based on spoken commands. This suggests a future where humans can interact with robots in a more natural and intuitive way.

FAQ

Q: What is a ‘hybrid AI framework’?
A: It’s a system that combines different AI techniques – in this case, generative AI and classical planning – to leverage their individual strengths.

Q: How does this improve robot performance?
A: By allowing robots to simulate actions and plan ahead, rather than simply reacting to their environment.

Q: What are the potential applications of this technology?
A: Robot navigation, autonomous driving, collaborative assembly, and more.

Q: What is an AI ‘hallucination’?
A: It’s when an AI

-

₹131 Crore For Men, ₹51 Crore For Women: The Gender Pay Gap That Exposes Indian Cricket’s Hollow Platitudes


BCCI’s corridors are thick with the musky scent of a grand, masculine triumph. Fresh off a T20 World Cup conquest in March 2026, the Board has anointed its gladiators with a staggering ₹131 crore. It is a sum that does not merely talk; it roars. Yet, cast your eyes back to the women’s ODI victory just a season ago. Their reward? A relatively paltry ₹51 crore. The arithmetic of our national pride, it seems, remains stubbornly anchored in a primitive imbalance.

The timing is a masterclass in irony, a jagged pill swallowed just one day after the nation’s airwaves were choked with the saccharine platitudes of International Women’s Day. We toasted the “Shakti” of our daughters on a Sunday, only to remind them on a Monday that their sweat, though just as salty, fetches less than half the market price of a man’s.

This is the “tilt”—the subtle, sickening lean of the playing field. We saw it in 2021, when the Tokyo sun set on a bronze-winning men’s hockey team and a fourth-place women’s squad. The Haryana government’s ledger was cold: ₹2.5 crore for the men, a mere ₹50 lakh for the women. The logic of the medal is a convenient shield for a deeper, older prejudice. It is the same prejudice that keeps the high altars of sports governance—the BCCI’s inner sanctum, the executive suites of our federations—almost barren of the female spirit.

Where is the fire from the top? We look to PT Usha, the Payyoli Express who now holds the reins of the IOA, and Mary Kom, the pugilist who knows the weight of a gloved fist. They must do more than inhabit these seats. They should recall the steel of Dipika Pallikal, who boycotted the squash nationals for years over prize money gaps, and the harrowing courage of wrestlers like Vinesh Phogat and Sakshi Malik, who took to the streets to challenge the very foundations of institutional apathy. Ultimately, match fees are the crumbs; the hoard is in the rewards, the retainerships, and the raw, unchecked power of the vote. We are a hoary civilisation, old as the dust of the Vedas, yet we behave like adolescents in the face of true equality. We pay a theatrical lip service to gender justice, yet we allow these financial gulfs to widen. One cannot, with a straight face, castigate the Taliban for their mediaeval erasure of women while maintaining a fiscal apartheid in our own stadiums. To claim India is Viksit—developed—while the rewards of the arena are still dictated by the presence or absence of a Y-chromosome is a hallucination. If we are to be a great power, we must first learn that greatness is not measured in the thickness of a chequebook but in the levelness of the ground upon which we stand.