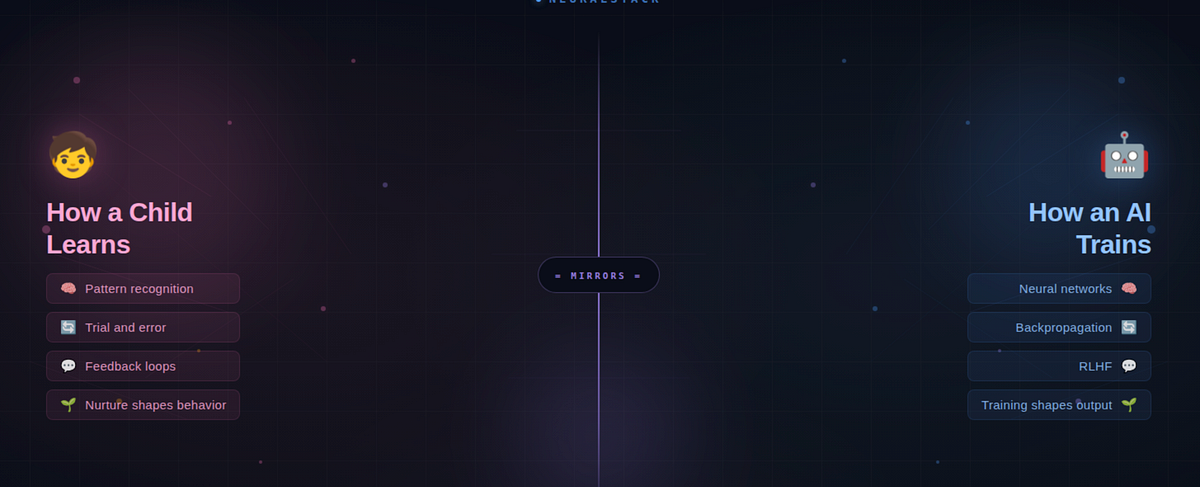

The Parenting Mirror: What Learning AI Is Teaching Me

Maybe the best engineers aren’t just good at code. Maybe they’re good at patience.

By Vikas · 5 min read

Image source: Claude-Generated

Punish a child every time they fail, and they stop trying. Praise them for everything, and they never grow.

Now replace ‘child’ with ‘AI model.’ The sentence still holds. That’s not a coincidence: it’s the same underlying principle. And once you see it, you can’t ignore it.

I started learning AI to build stuff and make my life easier. But somewhere along the way, AI taught me something about raising kids. And watching my kids taught me why AI works the way it does. Let me explain.

A Toddler and a Neural Network Walk Into a Dog Park

When your toddler points at a Labrador and says ‘dog,’ then points at a German Shepherd and says ‘dog’ again, that’s pattern recognition. Nobody handed them a rulebook. Nobody said ‘four legs + fur + tail = dog.’ They figured it out from examples. Lots of examples.

That’s roughly how a neural network learns. You feed it thousands of labeled images (‘this is a dog,’ ‘this is not a dog’), and it builds its own internal rulebook. No programmer writes the rules explicitly. The model discovers the patterns from data, the same way your kid discovered ‘dog’ from a hundred visits to the park.

And when your toddler points at a cat and confidently says ‘dog’? That’s not failure. That’s learning in progress. They saw four legs, fur, and a tail, and made their best guess.

Machine learning handles that wrong guess the same way. A loss function measures how far off the answer was, and backpropagation carries that error signal back through the network so each weight can be nudged in the direction that would have made the answer less wrong. Strip away the jargon and the idea is simple: tell the system where it went wrong, so it can adjust. It’s the machine equivalent of a patient parent saying, ‘Close! That’s actually a cat. See the pointy ears?’

But here’s where it gets deeper. It’s not just that you give feedback. It’s how you give it.

The Parenting Mirror

Think about three types of parents.
Each one maps directly to a style of AI training, and each produces a very different outcome.

🚫 The Punisher: Correct Every Mistake Harshly

With a child: They stop exploring. They become anxious. They only give ‘safe’ answers to avoid getting yelled at. Creativity dies. Survival mode kicks in.

With AI: This is over-alignment, sometimes called over-refusal. During training, the model gets penalized so aggressively for any wrong output that it refuses to answer even harmless questions. It’s the AI equivalent of a kid who won’t raise their hand in class because they got scolded once. The model isn’t safe; it’s paralyzed.

You’ve probably experienced this. You ask an AI a perfectly reasonable question and get: ‘I’m sorry, I can’t help with that.’ That’s an over-punished model. It learned that saying nothing is safer than risking a mistake.

🧸 The Coddler: Never Say No

With a child: They grow up overconfident and fragile. They think every answer they give is correct. They never develop resilience because they’ve never encountered real feedback.

With AI: This is one root of the hallucination problem. A model trained with weak or absent correction generates confident, polished, beautifully written responses that are completely wrong. It doesn’t know it’s wrong because nobody ever told it. Just like a child who was never corrected, it mistakes confidence for competence.

🌱 The Nurturer: Boundaries With Room to Grow

With a child: They feel safe enough to fail. They learn from mistakes without fear. They develop judgment,
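To make the dog-park analogy concrete, here is a minimal sketch in Python under a deliberately tiny setup that is not from the article: each animal is described by four hypothetical yes/no features (four legs, fur, tail, pointy ears), and the ‘network’ is a single neuron (logistic regression) rather than a deep model. Every guess produces an error signal, and each weight is nudged against its share of that error. With no hidden layers to propagate through, backpropagation collapses into this one gradient step, so treat it as an illustration of the feedback idea, not a real image classifier.

```python
import math

# Hypothetical toy data: each animal is [four_legs, fur, tail, pointy_ears].
# Label 1 means "dog", 0 means "not a dog" (here, a cat).
EXAMPLES = [
    ([1, 1, 1, 0], 1),
    ([1, 1, 1, 0], 1),
    ([1, 1, 1, 1], 0),
    ([1, 1, 1, 1], 0),
]


def sigmoid(z: float) -> float:
    """Squash any score into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights: list[float], bias: float, features: list[int]) -> float:
    """The single neuron's guess: probability that the animal is a dog."""
    score = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(score)


def train(examples, learning_rate: float = 0.5, epochs: int = 200):
    """Learn weights from labeled examples by correcting each guess."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            # Error signal: how far the guess was from the correct label.
            error = predict(weights, bias, features) - label
            # Gradient step on the log loss: move each weight against
            # its share of the error (features equal to 0 don't change).
            for i, x in enumerate(features):
                weights[i] -= learning_rate * error * x
            bias -= learning_rate * error
    return weights, bias


weights, bias = train(EXAMPLES)
print("untrained guess:", predict([0.0] * 4, 0.0, [1, 1, 1, 1]))  # 0.5, a coin flip
print("dog-like animal:", round(predict(weights, bias, [1, 1, 1, 0]), 3))
print("cat-like animal:", round(predict(weights, bias, [1, 1, 1, 1]), 3))
print("pointy-ears weight:", round(weights[3], 2))
```

Four legs, fur, and a tail appear on both animals, so they cannot tell dogs from cats on their own. After training, the pointy-ears weight ends up negative, which is the model’s own version of ‘See the pointy ears?’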

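The Punisher and the Coddler can also be read as a question of scoring. The sketch below is a toy expected-score model, a hypothetical illustration rather than how any real assistant is trained: a correct answer earns a reward `r`, a wrong answer costs a penalty `c`, and refusing scores 0. Answering only beats refusing when `p*r - (1 - p)*c > 0`, which rearranges to `p > c / (r + c)`, where `p` is the chance the answer is right. The function names `best_action` and `refusal_threshold` are made up for this example.

```python
def best_action(p_correct: float, penalty: float, reward: float = 1.0) -> str:
    """Pick whichever action has the higher expected score.

    Hypothetical scheme: a correct answer earns `reward`, a wrong answer
    costs `penalty`, and refusing ("I can't help with that") scores 0.
    """
    expected_answer = p_correct * reward - (1 - p_correct) * penalty
    return "answer" if expected_answer > 0 else "refuse"


def refusal_threshold(penalty: float, reward: float = 1.0) -> float:
    """Confidence needed before answering beats refusing: c / (r + c)."""
    return penalty / (reward + penalty)


# A model that is right 90% of the time, under increasingly harsh penalties.
for penalty in (1, 10, 100):
    print(penalty, round(refusal_threshold(penalty), 3), best_action(0.9, penalty))
```

With `r = 1`, a penalty of 1 lets a 90%-accurate model answer, but a penalty of 10 raises the bar to about 0.909, so the same model now scores better by refusing, like the over-punished model above. At the other extreme, a penalty of 0 drops the threshold to 0, so answering wins even at 5% confidence, a rough analogue of the never-corrected Coddler.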